We have started a search for all non-English editions of Robert Pirsig’s two books:

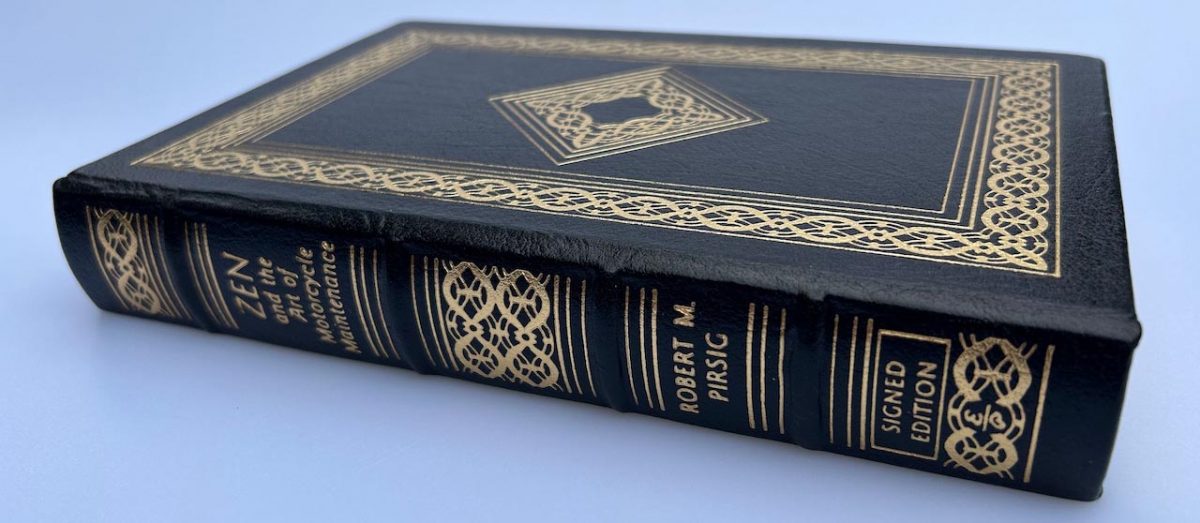

- Zen and the Art of Motorcycle Maintenance

- Lila: An Inquiry into Morals

The process is as follows:

- Check this spreadsheet to see whether your non-English edition is already listed

- If it is not, use this form to add it to the spreadsheet

We are interested in all editions, not just one per publisher. A publisher may have released several editions over the years, and each one should be added. In 2024, for example, two new English editions were published.

Editions can differ significantly in design and content. A new edition might, for example, feature a new cover and foreword. In most cases, a new edition also has a new International Standard Book Number (ISBN), but not always. The original 1974 edition of Zen and the Art of Motorcycle Maintenance, for example, shares its ISBN with the 25th anniversary edition published in 1999.

Please add as much information as you can. It will help us find and catalog each edition.